VF Corporation /

Redesigning Returns CX

The VF returns experience across brands is outdated compared to competitors. From an analytics perspective, returns are the #1 reason for calls to customer support teams; from a brand perspective, return initiation points are inconsistent; from a regional perspective, there are different restrictions to be cognizant of in NORA (North America) versus EMEA (Europe, the Middle East, and Africa).

I was tasked with tackling one of the biggest problems in the e-commerce space: redesigning the returns experience for some of the top brands in the world, like The North Face, Vans, Dickies, and Timberland. To do this, I partnered up with 2 other researchers to combine efforts, conduct research, and generate a customer journey map to align our brand partners on what an optimal returns experience looks like.

MY ROLE

Lead researcher

Project planning

Mentoring juniors

Synthesis

Deliverables

METHODS

Moderated interviews

Gap analysis

Contextual investigation

Journey mapping

Wireframing

COLLABORATORS

2 members of the Customer Experience (CX) team

TIMELINE

4 months

Spoilers

In collaboration with the CX and research teams, we gave the business a clear direction for improving our returns experience. Outcomes included…

We now have a definition of a “frictionless” return experience, which was unanimous regardless of brand loyalty or region

Our largest pain points in the current VF experience are related to return initiation and refund delays, and with our research artifacts we paved the way for a more accessible and competitive experience

We identified several inconsistencies in messaging and in the timeliness of communication with customers across brands, which were impacting customer perception and which we now had budget to solve

I. BACKGROUND

Understanding the gaps

Before jumping into this project, it was clear that many departments had attempted to improve the returns experience at VF, but none of them were successful. As a result of those efforts, there was a large amount of historical research to sift through. To ensure that we didn’t duplicate efforts, my teammates and I first had to understand what we knew vs. what we didn’t know about this space by reviewing and analyzing all past work.

Research objectives

After completing the gap analysis, we knew which areas to prioritize based on the level of impact vs. the level of effort needed for success. Multiple streams emerged as opportunities for us to consider, and in collaboration with my teammates, we felt confident about how to proceed and what we wanted to learn.

The headline was that we needed to conduct brand-agnostic CX discovery work focusing on the returns experience by…

Facilitating multiple sessions of moderated user studies to better understand differing perspectives of a “frictionless” returns experience

Evaluating competitors through multiple forms of gap analysis, contextual investigation, and trend work

Delivering an in-depth journey map of the current state VF returns journey to align brand partners and act as a north star for future roadmaps

Streams of research

As this project touched on multiple brands, it was important to conduct simultaneous streams of research in 10 weeks to gather the most information. This included…

Consumer interviews with customers who shop at competitor brands

Consumer interviews with customers who shop at VF brands

Contextual investigation

Internal interviews with customer service representatives

Survey(s) sent through UserTesting to pair quantitative data with qualitative

II. METHODOLOGY

Moderated interviews

A moderated user study is a one-on-one conversation with a participant. This method is used when we want to learn about user habits, behaviors, and emotions.

What is a “good” returns experience?

What is a “bad” returns experience?

What are the highs and lows of their experience?

What are their expected timelines around brand communication, return windows, and refunds?

Our participants were customers from North America and Europe, since VF is a global brand, split into segments of:

VF consumers / NORA

Focused on understanding the general perceptions and experiences of customers from North America who shopped at the top 4 VF brands on a regular cadence. Our screener set parameters for geographic location and also captured how people returned items, whether online or in-store, as well as their general experience with their chosen method.

VF consumers / EMEA

Feedback and insights from the first study were mostly positive and included more experiences with in-store returns than online ones. Though those insights were helpful, we found ourselves wanting more data on negative return experiences from customers who shipped their item back, and saw this second study as an opportunity to gather it.

Lastly, our EMEA base is also interesting because the UK is the largest European market for purchases from VF brands, while Germany is the largest market for returns from VF brands. We therefore added demographic quotas based on return method, experience rating, and region.

Gen pop / NORA

The third study, which was run very quickly after, was the first round of our testing with NORA gen pop. We defined gen pop as “frequent and familiar” online shoppers at competitor brands. We switched to gen pop studies because we lacked true user sentiment to match the data we had on competitor return processes. We were interested in what competitors have been doing in this post-pandemic digital transformation phase, as well as how their customers were responding, and figured that gathering insights from shoppers loyal to those brands would help us further identify where we stand in the race. Based on learnings from the second study, we again set quotas for return method and the experience rating associated with it.

Gen pop / EMEA

The fourth and last study was the EMEA version of study 3, with gen pop. We did switch a few competitor brands in our screener to be more localized to the EMEA region, but for the most part, those brands also compete with VF internationally. The only added demographic layer was again a split of participants between the UK and Germany, as those are our two most popular regions for buying and returning.

III. SYNTHESIS

Process

As studies were run and feedback rolled in at a quick pace, I had to be strategic about when and how I synthesized all of it. Thankfully, I had the help of a few different tools at this time, including UserTesting, Excel, and Mural.

UserTesting.com was my first step for raw data collection, but in this instance, it was used as a supplement to the Word doc templates we had created since that’s where we were gathering the true qualitative notes.

The second tool I use is Microsoft Excel. Excel is where I transfer the raw data into the various templates I’ve set up there, along with additional notes taken during the interview. Apart from moderated studies, I have provided separate templates that we’ve used for qualitative and quantitative synthesis of unmoderated studies, so Excel is usually the holy grail for the research team.

Lastly, I use Mural or any digital whiteboarding tool for data organization, mid-fidelity presentations, clustering and affinity mapping, as well as overall collaboration with designers, product managers, and the business. I think it’s always nice to turn complicated data into a visually appealing piece to show off.

The data I gather into Mural is usually ready to be presented, but if it needs to be more formal, it’s easy to drag and drop different pieces or synopses into a standard slide deck.

IV. FINDINGS

Top findings

From our customer interviews, six key findings emerged related to our customer needs and expectations:

Seamless member experience: Customers expect to start a return through their order history and to be able to track their return via a tracking feature on their account.

Email communications: Customers expect to be informed of their packages’ return milestones and any delays.

Return milestones: Testers agreed that they would like to be informed when they start a return (this email usually contains the return label), when the package is received by the carrier or distribution center, when the package/return is accepted, and when their refund is on the way

Completion of process: A return is complete only when the customer receives a refund

Refund timelines: Expected refund timelines varied depending on return method: 3 days for an in-person return and ~2 weeks for a carrier return

Return instructions: Return instructions should be easily readable, scannable, and available in as many areas as possible

V. COMPETITIVE EVALUATION

Building on insights

Following our synthesis of the moderated interviews, my junior partner on the team was tasked with completing a competitive evaluation that I would help guide and consult on. This included buying and returning items from…

Amazon

Asos

Lululemon

Nike

Adidas

VF Brands: The North Face, Vans, Dickies, Timberland

After doing so, she was to document each brand’s procedures and processes for packaging instructions, email communication, and online portal capabilities, resulting in a helpful visualization we created together.

In addition, we created an end-to-end timeline representing how long it took to receive the refund from each brand. This helped us understand where we stood in comparison to our industry benchmarks.

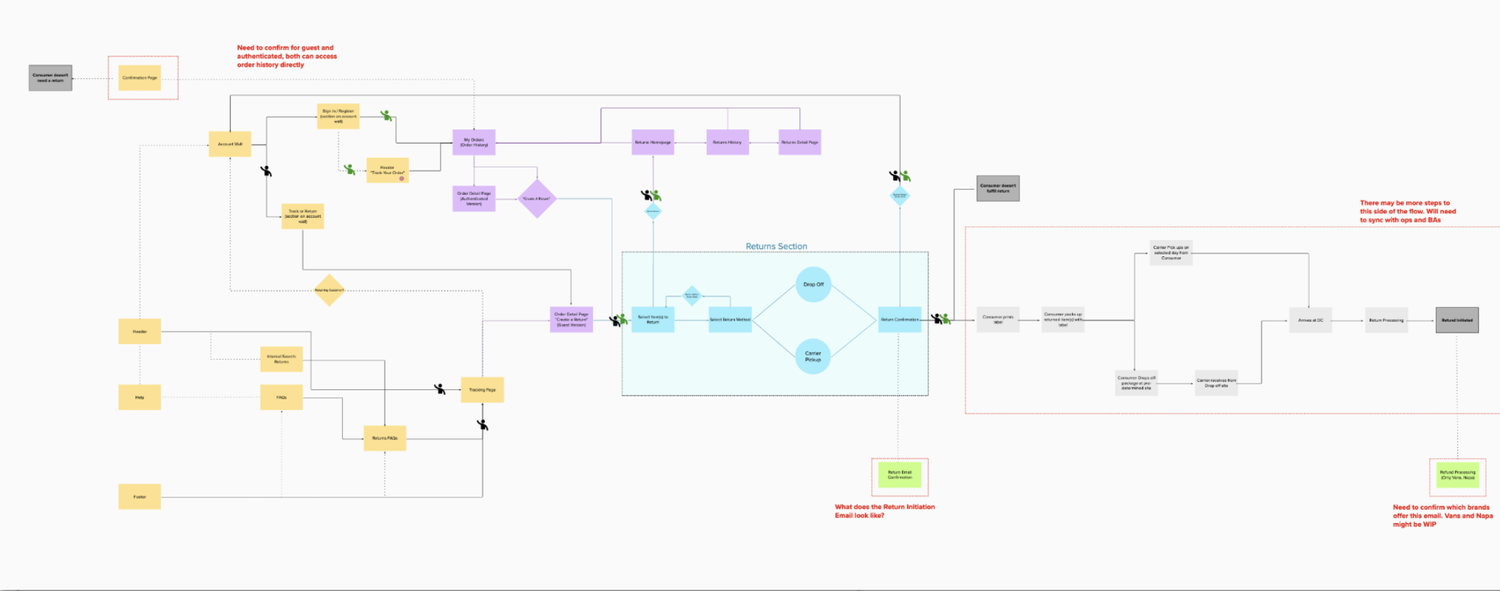

VI. JOURNEY MAPS

Defining the problem

With all of the research documentation we now had, it felt important to compile this work into a journey map. This map was intended to represent, as accurately as possible, the consumer actions, touch points, sentiment, pain points, measurable KPIs, and opportunities behind our lag relative to competitors. The purpose of this map is to complement our existing service design, which represents the logistics and supply chain side of a returns initiative. Essentially, we’re attempting to match logistics with user sentiment to paint the full picture and give strategic decisions an anchor.

We mapped out 5 steps: purchasing, receiving an order, initiating a return, tracking the return, and receiving a refund. Through the sentiment graph, we were able to understand where to prioritize our efforts and what parts of the experience needed to be improved.

When presenting our findings to product leadership, there were six key pain points from our research that we wanted to emphasize:

Return instructions were not easily accessible, readable, or scannable.

Since our brands had no online portals, customers were confused as to how they would start a return (which is usually done online in their order history/account).

Our brands didn’t offer a way for guest users to return items. With no guest portal, this was not an inclusive experience.

Because there was no online portal, our users couldn’t easily access a return label.

We didn’t offer a tracking capability. This left our customers worried about their package being lost or damaged.

Lack of tracking capability paired with extremely delayed refund timelines caused a lot of stress for our customers.

Clarifying pain points

VII. IDEATION

Potential solutions

After understanding the full scope of the problem, we defined some opportunities, ranging from low-hanging fruit to long-term goals, for each stage of the customer journey. With these potential solutions, we began wireframing what they could look like in the long term to help define the vision.

Placing an order: Expand the entry points to the return policy (email footer, website footer, making it accessible through search). Create a more visual and scannable return policy.

Initiating a return: Implement an online portal for guests and members (to track and start a return). Implement printerless return labels (QR code).

Waiting for a refund: Implement tracking capability. Send more frequent email communication on delays and milestones.

VIII. IMPACT

Deliverables

In collaboration with the CX and research teams, we gave the business a clear direction for improving our returns experience. Outcomes included…

We now have a definition of a “frictionless” return experience, which was unanimous regardless of brand loyalty or region

Our largest pain points in the current VF experience are related to return initiation and refund delays, and with our research artifacts we paved the way for a more accessible and competitive experience

We identified several inconsistencies in messaging and in the timeliness of communication with customers across brands, which were impacting customer perception and which we now had budget to solve

IX. TESTIMONIALS

Quotes from teammates

“You killed it! The hard work paid off. This work will set us up for years.”

“I LOVE how you synthesized all the data in the Mural board. So many great insights and I’m seeing the same patterns and trends from my competitor studies too, which is exciting!”